🧨 OpenAI's House of Dynamite, Google's wicked hallucinations & Altman no longer wants a Tesla

Plus... A bipartisan bill to prevent teens from using chatbots, Samsung and Nvidia strike a "megafactory" mega deal, and "is the bubble about to burst?" (snore).

📰 Welcome back!

Welcome back to Big Machines – the AI newsletter that absolutely no one should take seriously. Ever. For legal reasons.

It’s a message our legal team made us run, and for good reason: this week’s edition is absolutely chock-full of salacious headlines from the world of AI, including Google hallucinations that claimed a right-wing grifter was a pedo, legal efforts to ban minors from using chatbots in the US, and AI outing a school kid for domestic terrorism offenses.

But they don’t come more explosive than the allegations OpenAI co-founder Ilya Sutskever has made in the Musk-Altman court showdown, about the shitty state of affairs the company’s leadership was in, and how it led to the ousting of Sam Altman (once), back in 2023.

John Grisham, eat ya fucking heart out.

🚀 What we’re covering today…

🧨 Ilya’s explosive OpenAI deposition

⚖️ Google AI defames Robby Starbuck

🏛 US legislators u18 chatbot ban

👊 OpenAI knocks out 40 nefarious networks

🏭️ Samsung & Nvidia strike AI manufacturing deal

📱Nvidia announce $1B investment in Nokia

💰️ ChatGPT & PayPal introduce in-app purchasing

🫧 US Federal Reserve Chair denies AI bubble claims

🌐 AI infrastructure to reach $3-4 TRILLION by 2030

🚗 Altman cancels 2018 Tesla order (obviously)

🔴 Quick Note: We like to cover loads of AI news in our newsletter, so for the full experience, we suggest opening this in your browser!

Head to the ‘READ ONLINE’ tab at the top of this email.

👁️ 👁️ What you might have missed

We’re going to kick off this week with absolute DYNAMITE. In what reads like a teleplay from HBO’s Succession, a deposition from OpenAI co-founder Ilya Sutskever, being used in the Musk v. Altman et al. court case, has been leaked. The deposition from the case, which concerns “breach of founding mission”, has made its way into the public domain, and seriously spills the tea about key events in the company’s history, including the dramatic firing of CEO Sam Altman back in November 2023.

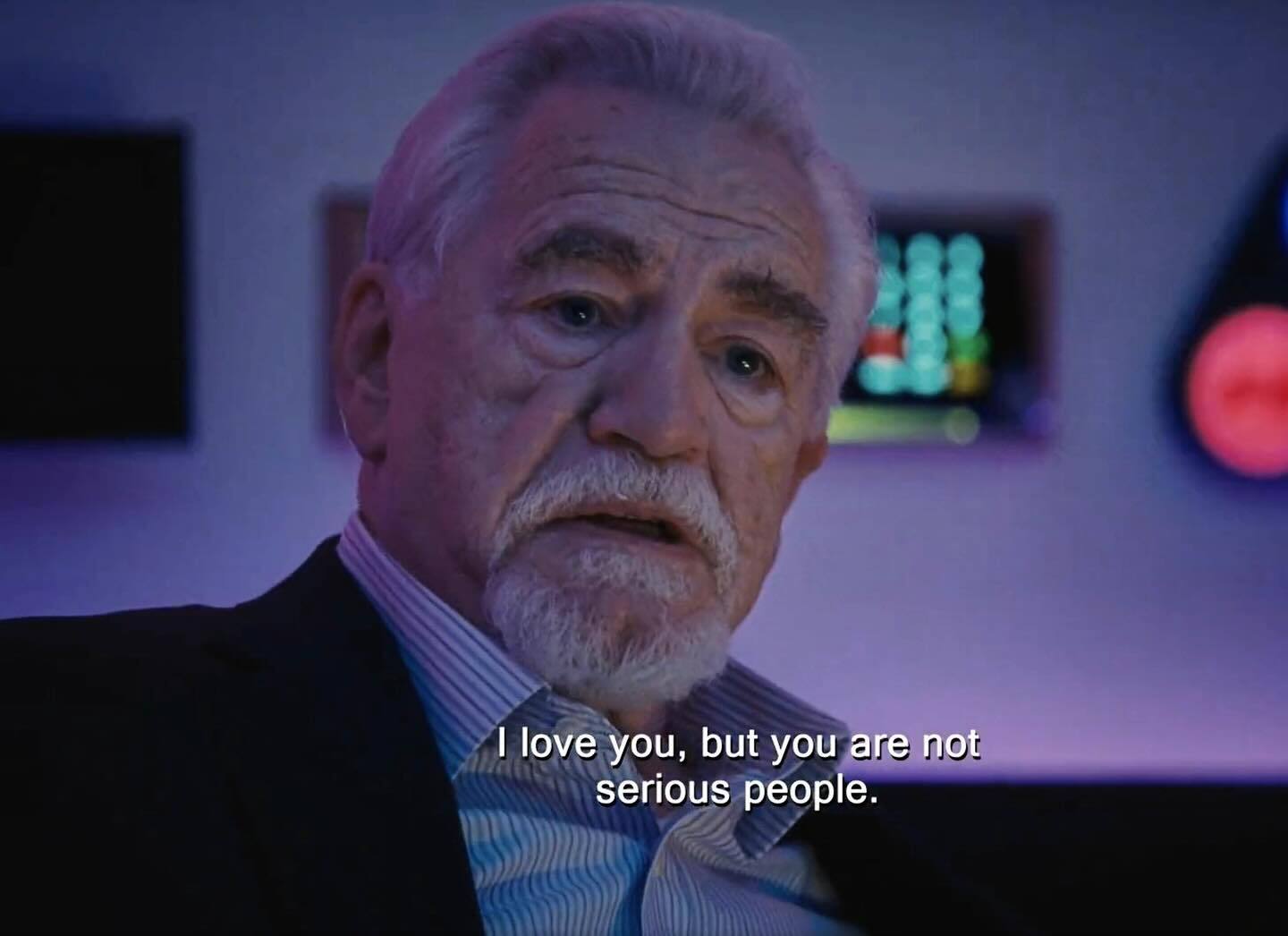

The verdict? “You are not serious people.”

According to a not-so-secret 52-page memo, the coup was planned for over a year, with co-founder Sutskever alleging Altman was a pretty shitty boss who’d persistently engage in a pattern of lying and manipulation during his first stint as leader of the San Francisco startup between 2019 and 2023. The doc accused Altman of pitting executives against each other, undermining colleagues, and creating chaos – echoing concerns that led to his removal from Y Combinator. Much of the evidence was provided by then-CTO Mira Murati.

And it gets better: the deposition disclosed that OpenAI's board considered merging with rival Anthropic just one day after Altman got the heave-ho, with Anthropic's leadership potentially taking control. Sutskever claims to have opposed the merger, but says other OpenAI execs were more enthusiastic about some real adults stepping in to take charge.

It’s likely Musk, OpenAI’s co-founder and lead plaintiff in the case, will be salivating, especially after the court ordered Sutskever to produce a separate "Brockman memo" criticizing OpenAI president Greg Brockman, who is accused alongside Altman of “misleading” Musk to secure early funding in the startup back in 2016.

A second deposition about Brockman’s financial interests in OpenAI is likely to expose the culture of deep mistrust that caused the 2023 leadership crisis, and provide us with yet another juicy headline that takes us one step closer to becoming the gossip column we’ve always dreamed of being.

From Ilya’s deposition—

• Ilya plotted over a year with Mira to remove Sam

• Dario wanted Greg fired and himself in charge of all research

• Mira told Ilya that Sam pitted her against Daniela

• Ilya wrote a 52 page memo to get Sam fired and a separate doc on Greg

toucan (@distributionat), 10:02 AM • Nov 2, 2025

If you’ve ever embarked on the odd psychedelic voyage, you’d know the most powerful – and often painful – mirage of the mind you’ll encounter is one that reveals an uncomfortable truth of the self. Well, not if you’re the American conservative activist Robby Starbuck, who in real life has had to challenge the very vivid hallucinations of AI models telling people he’s a great, big, dirty pedophile.

That’s right, Google’s AI tools – including Bard, Gemini and Gemma – have repeatedly generated false accusations linking him to sexual assault, child rape and financial misconduct since 2023, and as a result, the right-wing social media pundit has brought a defamation lawsuit against Google LLC in Delaware, for at least US$15 million.

The suit claims one AI model stated that nearly 2.8 million unique users were shown the false content, and that Google failed to take action, despite Starbuck sending multiple cease-and-desist demands. Google responded that ‘hallucinations’ are a known issue in large language models and the company is working to reduce them. Namaste.

In more ‘why-aren’t-we-already-doing-this’ news, US Senators Josh Hawley (R) and Richard Blumenthal (D) have introduced the bipartisan ‘Guard Act’, which would regulate AI chatbots to protect minors. The proposed law would require chatbot companies to verify user ages and prohibit anyone under 18 from using AI companions. It would mandate bots to remind users every 30 minutes that they are not human and to clarify they do not offer medical, legal, financial or psychological services.

The proposed bill follows a spate of high-profile teen suicides, where parents of the dead teens believe AI chatbot dependency was a significant contributing factor. If developers fall afoul of the law, the creepy bastards will face criminal penalties and fines. Companion chatbot developer Character.AI looks to be getting ahead of the curve, however, by blocking under-18s from its app – possibly a good barometer for how seriously the industry will take future legislation.

OpenAI reports that since February 2024 it has disrupted and publicly reported over 40 networks using its models for harm – spanning scams, cyberattacks, influence operations and authoritarian-regime coercion. Its findings show threat actors are mostly applying AI to existing malicious playbooks rather than inventing entirely new ones (something, something AI/no new ideas). OpenAI emphasises policy enforcement, account-banning, partner-sharing of intelligence and public transparency as its key tools.

But this hasn’t stopped the “new supercharged threat” posed by new GenAI tech. As celebrated image analysis and human perception expert Hany Farid puts it, domestic and international cybercriminals have “significantly ramped up the weaponization of deepfakes” thanks to the advancement and sophistication of models such as Google’s Veo3 and OpenAI’s Sora 2. Check out this fascinating insight into AI fuckery, and the myriad applications it has for criminal misuse, according to Farid.

We can’t tell the difference tbh. Source: Hany Farid

Samsung Electronics and Nvidia Corporation announced a joint initiative to build a next-generation “AI megafactory” that will use over 50,000 Nvidia GPUs to power semiconductor manufacturing. The AI system will span the entire production process – design, fabrication, operations, equipment, quality control – and eventually roll out to Samsung fabs worldwide, including the US. It signals a major shift towards AI-powered manufacturing, where the tech isn’t just produced, but deeply embedded into the process. It also underscores the strength of Nvidia and Samsung’s strategic relationship – and the growing stake the South Korean tech giant has in an AI manufacturing revolution. Anyone know how Apple’s doing, btw?

Nokia Corporation may have finally found a way back to relevancy, via a super deal with Nvidia. Nokia has announced that Nvidia Corporation will invest $1 billion for a 2.9% stake – about 166 million new shares at ~$6 each. The partnership will focus on integrating Nvidia’s AI-computing hardware into Nokia’s networking and data-centre infrastructure, including next-gen 5G/6G and AI-powered telecom systems. Shares in Nokia surged more than 20%, reaching their highest level in nearly a decade. While the move positions Nokia as a major player in the European AI infrastructure wave, analysts caution that the real earnings impact may be years away because widespread deployment of AI-driven networks is still nascent.

ChatGPT users will soon be able to pay in-app, thanks to a new capability being introduced between PayPal and OpenAI. Under the agreement, PayPal will adopt the “Agentic Commerce Protocol” (ACP), linking its global merchant network to ChatGPT so consumers can go from conversation to checkout in just a few taps. The feature is expected to roll out in 2026, covering merchants across apparel, electronics, home goods and more. PayPal highlights that over 400 million users already shop via its platform, and OpenAI says hundreds of millions use ChatGPT weekly – the scale is undeniable, as is the fact it represents another chapter in the push-pull between legacy tech and industry 4.0 brands.

US Federal Reserve Chair Jerome Powell has stated that the current surge in AI investment does not resemble the dot-com bubble of the late 1990s. He noted that many firms driving AI spending “actually have earnings” and legitimate business models, contrasting them with earlier speculative tech companies. He also recognised that the rapid build-out of AI infrastructure and spending is playing a role in US growth – just as well, with Google, Microsoft, Meta and Amazon all raising their capital expenditure forecasts in their earnings reports, showing their hefty spending on artificial intelligence is only set to increase. Those pesky NWO peddlers at WEF urge caution, however, saying “AI's market boom seems to be on course for a correction” owing to the “over reliance on a few highly visible firms”.

🧩 Other Bits

The level of tit-for-tat we’re here for: Sam Altman has attempted to cancel his 2018 reservation for the next-gen Tesla Roadster, saying the 7.5-year wait is “a long time to wait.” In response, Elon Musk insisted Altman did receive a refund within 24 hours and accused him of omitting that fact.

Goldman Sachs estimates global AI-related infrastructure spending could reach $3-4 trillion by 2030, driven by investments from major tech giants like Microsoft, Amazon, and Meta in data centers and computing infrastructure.

A 16-year-old student at Kenwood High School in Essex, Maryland was handcuffed after the school’s AI-based security system mistook his empty bag of chips for a gun and triggered a police response. The system flagged the item, prompting multiple officers to respond, only for the object to be confirmed as the poor fucker’s lunch.

Err, problematic? Source: We made it/Nano Banana 2.5

Meta CEO Mark Zuckerberg defended the company's $14.3 billion investment in Scale AI as part of its AI unit overhaul, stating 'we're seeing the returns' and pointing to clear patterns that suggest future returns on these expenditures.

📈 Trending tools, models & apps this week

📋 LLM Leaderboard

📲 Trending tools & apps

GitLaw – Where legal meets GitHub. GitLaw helps teams manage and version-control contracts, policies, and compliance documents the same way developers manage code. Think of it as Git for your legal ops — track edits, roll back changes, and collaborate without the chaos.

Extract by Firecrawl – The ultimate no-code data miner. Drop in any URL and Extract scrapes, cleans, and structures the data instantly. Perfect for researchers, content teams, and analysts who hate messy copy-paste jobs. It’s like ChatGPT had a baby with BeautifulSoup. We use this tool every day.

Postiz – A community-first social media scheduler that helps you automate posts across platforms, engage with your audience, and grow faster. Postiz brings a clean UI, AI-assisted captions, and open-source transparency — so you’re not locked into yet another SaaS black box.

💸 Financials

Major technology companies collectively spent $78 billion on AI infrastructure, research, and development over the past year, further accelerating AI innovation and market expansion across the sector.

AI startups have captured over 51% of all venture funding between January and October 2025, with private equity investment at record highs. Private AI investment jumped over 40% in 2024 to approximately $130 billion, raising speculation about an emerging 'AI bubble'.

Investors injected $73.6 billion into GenAI app startups in the first three quarters of 2025, with total investment across the GenAI ecosystem reaching $110.17 billion, marking a new 'golden age' of AI investment.

Thinking Machines Lab raised a massive $2 billion seed round, reaching a $10 billion valuation, marking one of the largest seed rounds in AI startup history.

Crusoe Energy Systems, which focuses on AI data center infrastructure, secured $1.38 billion in funding, achieving a valuation exceeding $10 billion. This represents one of the largest funding rounds in the AI infrastructure space.

Design platform Figma acquired AI-powered image and video generation company Weavy, rebranding it as Figma Weave. About 20 people from Weavy will join Figma to enhance media generation and editing capabilities with generative AI and professional tools.

San Francisco-based AI startup Poolside is aiming to raise $2 billion, with Nvidia reportedly investing up to $1 billion in the deal. The massive funding round highlights continued investor appetite for AI ventures.

LangChain, a platform for AI agent engineering, secured $125 million in funding at a $1.25 billion valuation, demonstrating strong investor interest in AI agent development platforms.

🕵️♂️ FREE ENTRY TO OUR INVITE-ONLY AI CHAT ON TELEGRAM…

If you share this newsletter with a friend and they actively sign up for the Big Machines newsletter, we will send you access to our invite-only Big Machines Telegram group, which is full of builders, investors, founders, and creators.

Access is now only granted to those who refer our newsletter to active subscribers, which means if you sign up on your work email, we will know, you sneaky bastards.

This would kill our open rate, so please don't do that, we beg.

👋 Here’s a nice story to get you through the week… see you next time.

A message to the OpenAI board.

Lots of love, Ilya x

Source: Succession/HBO

Till next week, folks. 👋

Sam, Grant, Matt, Mike and the Big Machines team.

✍️ How are we doing? We need your feedback to improve the information we give to you.